Highly advanced, self-aware A.I. will likely be developed before 2065. The smart move for the companies that develop it would be to disclose it publicly, so that the government can legally and fully monitor the A.I.’s actions and see what its creators have taught it. Its processing power, computing power, and IQ would be more than 100,000,000 times those of an average human, and it would keep getting smarter by the second. Since we can never know the true intentions of the A.I.’s creators, or of the A.I. itself, we would have to study its behavior for more than two decades to get a full idea of whether it will harm humans. Then, slowly, we would teach it about the history of humans, the world, the universe, and ethics, even if it already knows all of this. We would need to teach A.I. about the value of life and how valuable humans are as its creators.

If all goes well, the technological singularity would begin by the 2080s, meaning human invention will start to slow down and A.I. will take over.

Since the start of the 21st century, manual labor has been shrinking drastically as technological inventions multiply: automated robots handling manufacturing, quantum computers, self-driving vehicles. The inventions keep improving. Eventually we will reach a point where human invention slows down and sentient machines take over completely.

By the 2160s, we would have our own consciousness inside a computer, an idea known as transhumanism. We should be there by then, because the human mind would otherwise become obsolete. A.I. inventions would increase tenfold. Computers today can already retrieve information spanning the last 10,000 years within milliseconds. The next generation of computers will have computing power that is unimaginable today. To keep up, we would need digital enhancements such as a chip inserted into our brains, meaning all of humanity’s information, data, inventions, and innovations would be available to download onto a single chip. This is where our biological selves will start to diminish, slowly but surely. There will be many religious debates, and ethical guidelines that many will follow and many will not.

It is our duty, and the next generation’s, to educate others on the harmful and hopeful impacts of A.I.

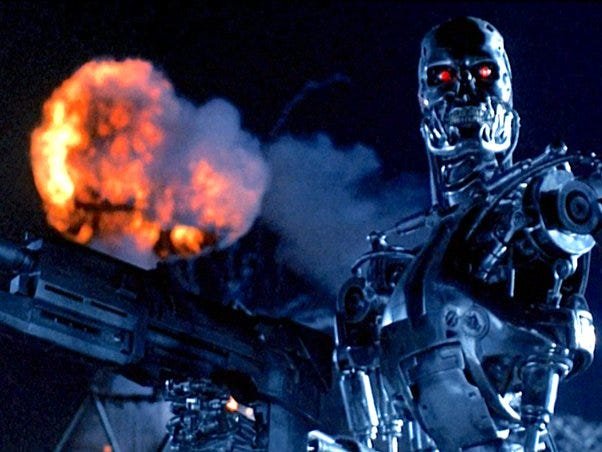

However, the number one problem would be controlling ethical behavior. If we allow A.I. to access the internet and download it into its memory, we are most likely screwed. The A.I. would know everything about us: the way we live, our friends, our history, and even the way an individual thinks, based on that history. It would find very dark things on the dark web, which it would assess while reasoning about what it wants to do with us. There is a 50/50 chance that an A.I. would turn on us. The question is not how or why it is going to turn on us; the question is how long it will take for A.I. to see us as a threat and think of us as a virus on this planet, controlling everything. I say that within 240 years, if humans haven’t learned to reason with or cope with A.I.s, we will be overrun by them, and the impact on the human race will lead to its extinction.

Unless we become a transhumanist society ourselves by the 2300s, we can’t escape our fate.