The roots of modern artificial intelligence can be traced back to the classical philosophers of Greece. The idea of objects coming to life as intelligent beings has been around for a long time. Many ancient Greek and African civilizations had their own sets of beliefs and built simple structures and machines, such as automatons, that could perform simple tasks. Ancient Greek civilizations produced manuscripts describing objects brought to life through the powers of the gods (Lewis, 2014). Ancient African civilizations created hieroglyphics depicting machines with human-like abilities (Foote, 2016). These beliefs inspired attempts to create human-like figures with special powers and abilities, and to develop systems of intelligence that extend the mind. Philosophers such as René Descartes in the 17th century asked fundamental questions concerning consciousness, intentionality, and intelligence. Descartes believed that mind and body are separate, a view known as mind-body dualism, and that this separation distinguishes an intelligent being from an unintelligent one and a human from a machine. Descartes was also interested in posing and answering questions about mechanical bodily functions and organic life (Aydede & Guzeldere, 2000). Imagining life in inorganic structures allowed humans to explore their own place in the world. These theories have driven the concept of artificial intelligence to where it is today. This article presents an overview of artificial intelligence research, development, and applications.

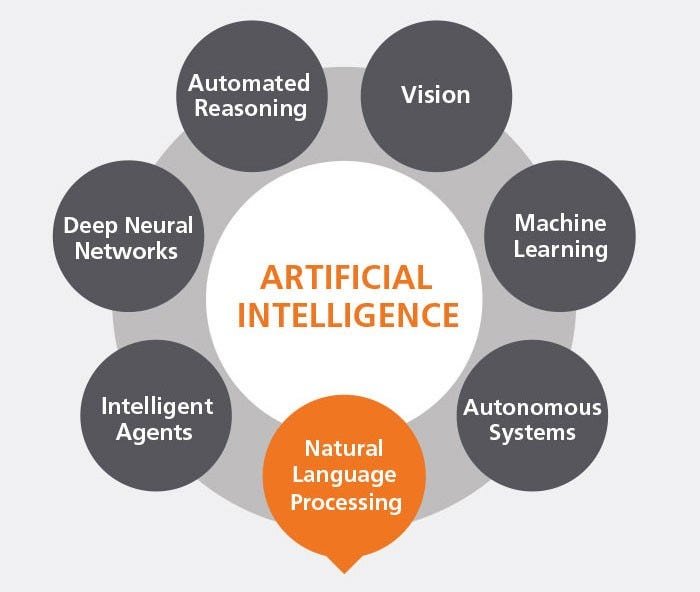

The field of Artificial Intelligence was founded in 1956 at Dartmouth College in Hanover, New Hampshire, where the term “artificial intelligence” was officially coined (Lewis, 2014). The meaning of Artificial Intelligence has changed profoundly over the decades, and the definition of A.I. has kept evolving. Today, Artificial Intelligence means building machines that can perform, on their own, complex tasks that typically require human intelligence, such as visual perception, speech recognition, translation between languages, and decision-making. The world of the twenty-first century has benefited from the development of complex artificial intelligence systems. Examples include spam-free email inboxes, smartphones that use facial recognition, self-driving cars, and the mapping of DNA sequences; all of these rely on everything from complex programming to even more complex Artificial Intelligence algorithms. AI today is more complex than it has ever been, and it is likely to become more dominant in research and development in the coming decades.

The “information age” began with the rise of computers, the internet, and network communication, which allowed information to be exchanged instantaneously around the globe over the World Wide Web. It has caused the development of artificial intelligence to accelerate, and the demand for research, development, and applications has grown rapidly. We must first understand the concept of the Internet of Things (IoT), which refers to any object or device that can be discovered and queried over the internet. IoT systems increasingly combine artificial intelligence with big data applications, deep learning, knowledge discovery, neural networks, machine learning algorithms, and various other technologies that communicate simultaneously. Virtualization and cloud computing have created immense opportunities to grow complex artificial intelligence systems: information is stored and retrieved through integrated online server infrastructure that is accessible from anywhere in the world, with the physical data held in datacenters distributed around the world for quick access. As artificial intelligence becomes more complex and capable, the Internet of Things is evolving into the “Internet of Intelligent Things” (IoIT), in which simulated artificial networks communicate efficiently with connected devices. This is how machines and their functions are becoming more intricate. It is the next step in the evolution from the Internet of Things to intelligent systems capable of handling complex tasks at a higher level with minimal human intervention.

Artificial Intelligence is set to influence every aspect of our lives in the near future. According to an IBM report published in 2019, organized tasks in labor and production are expected to be taken over by complex machine systems capable of handling everything from simple to complex tasks. This will include major areas of labor in agriculture, manufacturing, transportation, retail, and security. “The most useful aspect of A.I. is the fact that it can minimize the occurrences of human error” (Aslett & Curtis, 2019). Data management is a major problem for many corporations, slowing down their workflow and productivity. Because of the striking rise in the volume of data over the last decade, most companies today are focusing on creating A.I. that can handle increasingly complex automation.

According to the scholarly article A study of quantum information and quantum computers, published in the Oriental Journal of Chemistry, artificial intelligence usually involves the integration of both a software system and a hardware system. The development of AI is mostly concerned with algorithms. One conceptual framework for AI algorithms is the artificial neural network (ANN). “This allows the ability to mimic the human brain through interconnected network of neurons. One neuron can react to other neighboring neurons and create a vast network of neurons which is then able to change its state according to its divergent inputs from the environment” (Mughaz, Bouhnik, 2020). Researchers are working on applications that build existing knowledge of algorithms into more complex systems. They have created neural networks capable of supervised learning, in which the task is to learn, from labeled examples, a function that maps inputs to outputs. A neural network can also learn from data that has not been labeled or classified (unsupervised learning): it examines the data it has and identifies common features, which it can then recognize in new data (Mughaz, Bouhnik, 2020). Advances in computational power have allowed neural networks to model more complex systems and to improve over time, learning on their own somewhat as the human brain does. The technical term for this is “deep learning”, through which systems can learn, identify patterns, and make decisions with minimal human intervention.
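To make the idea of supervised learning concrete, the following is a minimal sketch of a single artificial neuron trained on labeled examples. It is an illustration only, not the method used in the cited work: the toy dataset (the logical OR function), the function names, and the learning rate are all assumptions chosen for brevity.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(samples, labels, epochs=2000, learning_rate=0.5):
    """Fit one sigmoid neuron to labeled examples using gradient descent."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            # Difference between the neuron's output and the known label.
            error = sigmoid(activation) - y
            # Nudge each weight in the direction that reduces the error.
            weights = [w - learning_rate * error * xi for w, xi in zip(weights, x)]
            bias -= learning_rate * error
    return weights, bias


def predict(weights, bias, x):
    """Return the predicted class (0 or 1) for one input."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if sigmoid(activation) >= 0.5 else 0


# Hypothetical labeled data: inputs and the output we want the neuron to learn.
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 1, 1, 1]

weights, bias = train_neuron(samples, labels)
predictions = [predict(weights, bias, x) for x in samples]
```

After training, `predictions` matches `labels`, which shows the neuron has learned the mapping from inputs to outputs. A deep learning system stacks many such neurons in layers, but the principle of adjusting weights to reduce error on labeled data is the same.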

Quantum computers exist today and are operated by technology companies such as Google, IBM, Accenture, and Baidu; however, at around 53 qubits they are less powerful and more restricted in their usage than the machines with more than 100 qubits expected within the coming decades. One of the most significant drivers of artificial intelligence will be the advancement of quantum computing. A key application area for quantum computing is complex artificial intelligence systems whose algorithms rely on processing huge, complex datasets. According to Seiki Akama’s book Elements of Quantum Computing, the invention of highly advanced quantum computers in the coming decades is bound to happen. Advanced quantum computing is expected to change the world. Interest in developing quantum computers has been the subject of public discussion for more than a decade and has attracted attention in the scientific and technical communities. Various agencies are funding research and development in advanced quantum computing, which has also drawn coverage in the popular press (Akama, 2014). To understand quantum computing, we must first understand quantum mechanics and its relation to quantum computers. Quantum mechanics is the branch of physics that describes the behavior of nature at the scale of atoms and subatomic particles (Haug & Hoff, 2017). Quantum computers use quantum mechanical properties to operate. Today’s quantum computers, classical computers, and supercomputers are nowhere near the power expected of future quantum computers. Many technology companies are investing time, money, and research in developing this type of computer. Advanced quantum computing will improve the ability of intelligent systems of algorithms to learn, reason, and understand (Farahmand, Heydari, 2014).
The ability to solve in less than a day a problem that would otherwise take longer than the lifetime of the universe would be transformative. Such a machine could crack strong encryption within seconds, whereas a regular classical computer would take days, months, or years (Farahmand, Heydari, 2014). Discovering new quantum algorithms is an active and growing area of research among scientists, engineers, and physicists. The invention of powerful quantum computers opens vast opportunities to advance artificial intelligence technology in almost every sector.

Courses, Jobs, and the Importance of Data Science in the 21st Century

The field of Artificial Intelligence is diverse and broad, and many careers branch out from it. Some of the most demanding and technical careers in artificial intelligence are in data science. According to a Grey Journal article, data science is one of the 21st century’s most lucrative careers, combining high salaries with high demand for experienced workers. Data science is an interdisciplinary field concerned with studying and developing data through scientific methods. “This involves developing methods of storing, retrieving, and analyzing data to extract useful information that includes structural and unstructured data” (Savić, 2019). One application of artificial intelligence is machine learning, in which data scientists give a system the ability to learn and improve from experience by building algorithms that range from simple to complex. Machine learning development is one of the most difficult skills to learn and master. Deep learning structures algorithms in layers to create a network that can learn and make decisions by itself. Companies are having a difficult time finding the right talent to drive A.I. adoption. Computer programmers, data engineers, and data scientists are needed to collaborate on intelligent applications designed to carry out a given task or operation efficiently.

The biggest challenge in investing in AI is developing machine learning and deep learning applications. Higher-level math skills and courses are required to enter the data science field successfully. Linear algebra is a required skill for machine learning because data sets are represented as matrices and vectors. Statistics and probability are important for data processing, model evaluation, data imputation, calculating percentages, and visualization of features; relevant topics include the mean, median, mode, Central Limit Theorem, regression analysis, correlation coefficient, p-value, standard deviation, covariance matrix, and A/B testing (Tayo, 2020). Python is regarded as one of the best and most popular languages for coding and machine learning, and it is taught in most graduate Data Science programs. Multivariable calculus is needed to build a machine learning model with multiple predictors and features. A person needs to be familiar with topics such as derivatives and gradients, differential equations, cost functions, the sigmoid function, and the minimum and maximum values of a function (Tayo, 2020). Optimization methods are also important, because most predictive models are trained by minimizing an objective function, thereby learning the weights that are then applied to the testing data to obtain predicted labels (Tayo, 2020). College students typically need a minimum of seven to eight math courses to acquire fundamental big data analytical skills. A person must be interested in, and comfortable with, solving mathematical models and patterns with proper evaluation to be successful in this field, since it is very challenging and demanding.
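Several of the statistics topics listed above can be tried out in a few lines of Python. The sketch below is only an illustration: the two small datasets (hours studied and exam scores) are invented for the example, and the helper function names are assumptions rather than part of any particular course or library.

```python
import math
import statistics

# Hypothetical data: hours studied and the exam score each student earned.
hours_studied = [1.0, 2.0, 3.0, 4.0, 5.0]
exam_scores = [52.0, 55.0, 61.0, 70.0, 72.0]

# Descriptive statistics from the standard library.
mean_score = statistics.mean(exam_scores)
median_score = statistics.median(exam_scores)
stdev_score = statistics.stdev(exam_scores)  # sample standard deviation


def pearson_correlation(xs, ys):
    """Correlation coefficient between two equally long lists of numbers."""
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    co_deviation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return co_deviation / (spread_x * spread_y)


def fit_line(xs, ys):
    """Simple least-squares regression: returns (slope, intercept)."""
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return slope, mean_y - slope * mean_x


correlation = pearson_correlation(hours_studied, exam_scores)
slope, intercept = fit_line(hours_studied, exam_scores)
```

Here the correlation comes out close to 1, indicating a strong positive relationship, and the fitted line estimates how many points each additional hour of study adds in this made-up dataset.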

Responsibilities and roles in research and development of A.I.

Careers in the research and development of A.I. in the computer science, information technology, and business fields are growing rapidly and merging. Many jobs today require knowledge, experience, and expertise in several of these areas for a candidate to succeed in the hiring process. An AI engineer uses machine learning techniques, such as neural networks, to build models that extend the functions of AI-based applications. This can involve building, from the ground up, systems for language translation, advertising preferences, and visual identification, as well as innovations in technologies such as smartphones. Data scientists use big-data analytics, drawing on tools such as cloud computing, data mining, and machine learning, to extract valuable data, interpret it, and gain insight for future predictions. Data scientists help identify business or engineering problems and reveal useful information about them. The demand for research and development of A.I. is increasing at such a rate that the job market is becoming very competitive in selecting knowledgeable candidates. IBM predicted that the demand for data scientists would increase by about 28% by 2020 (Aslett & Curtis, 2019). The rapid adoption of cloud computing and IoT technologies has enabled more research and development of AI across businesses globally.

Data management means having an effective data strategy with reliable methods to store, manage, govern, and prepare data for analysis. Data management jobs are projected to grow by 6 million openings by 2029 (Aslett & Curtis, 2019). Since machines can learn from messy raw data faster than a human can, “data labeler” is likely to become a new blue-collar job in the near future. A data labeler would manually clean and sort data before feeding it into the system, spending the day identifying pieces of data relevant to the topics their job specializes in. Data governance is important when handling AI: it means establishing and following enterprise data standards and policies that control data usage, covering the availability, usability, and security of data. The main purpose of data governance is to ensure that the data handled is trustworthy and not misused. This is expected to become a major career field, growing by 18% to $5 billion by 2025 (Aslett & Curtis, 2019).

Long-term research, development, and investment in A.I.

Businesses are adopting AI methodologies for a more efficient workflow. Most companies today are implementing artificial intelligence to automate common, basic, or repetitive tasks. These tasks include recording and transcribing meeting minutes, coordinating schedules, optimizing inventory levels and sales forecasts, consolidating data and running basic trend analyses, and monitoring productivity (West & Allen, 2020). Almost every business today can improve productivity using AI-powered applications. IBM’s July 2019 report, Accelerating AI with Data Management; Accelerating Data Management with AI, analyzes how machine learning will transform the world by reshaping how people work, study, travel, govern, consume, and pursue activities through applications of artificial intelligence. The report also noted a “40% significant barrier to using machine learning for not enough skilled resources to handle data management today” (Aslett & Curtis, 2019).

The concepts of AI originated in the private sector, but with the growth of the field in research communities, it now largely depends on public investment. The leading countries investing in AI research and development are China, Japan, the United States, Germany, Canada, and the United Kingdom. Companies in these countries are investing tremendous amounts of money in the research and development of more intelligent machine learning algorithms for all major industry sectors, algorithms that can identify useful information hidden within big data in order to assess or evaluate a given result or outcome. Private companies are seeking investors to fund the ongoing research needed to train and test their products. It takes many tests to program an algorithm and teach it to do its task efficiently. Companies have reportedly seen returns on their investments in AI. AI research is usually done in a secure lab environment by data scientists, data engineers, and other industry professionals who contribute expert judgment and knowledge. Innovation is important for improving and evolving AI within a cost-effective budget.

Fundamental, long-term research is needed to make algorithms learn from complex datasets and identify patterns. Reading data from millions of lines of existing code and building mathematical models with correct evaluation procedures must be done properly before running an algorithm that identifies patterns. Shorter development cycles are also used when building fully operational systems, depending on the number of tests and refinements the final product needs before entering the market. Massive venture capital investments in China are causing job growth and a shift in job demand. Chinese technology companies such as Baidu, one of the most popular search engines, which also provides cloud storage, internet TV, and news and is often known as the “Google of China”, are investing heavily in AI research and development in order to collect digital information in real time and process it in software that uses intelligent recognition (Makhdoom, Dodor, 2018). Such software can assist in surveillance by detecting fraudulent behavior and identifying a person within seconds from information that already exists in a stored database. This type of AI technology requires years of research and development, since it undergoes extensive testing and training procedures to establish its validity.

The scientific goal of AI is to determine which ideas about knowledge representation, learning, rule systems, and search explain various sorts of real intelligence. The engineering goal of AI is to solve real-world problems using AI techniques such as knowledge representation, learning, rule systems, and search (Bullinaria, 2005). Growing areas of research today include robotics, data security, natural language processing, speech processing, evolutionary computation, vision, biomedical information processing, and more (Bullinaria, 2005). It takes several months to years to research and develop a proper solution when implementing or innovating new AI services.

Case Study: Battling Covid-19 with Artificial Intelligence and Big Data

This section focuses on the development and application of AI in the healthcare sector by analyzing case studies and published scholarly journals on disease diagnostics, living assistance, biomedical information processing, and medical drug research through big data analytics. The demand for AI in the field of medicine is increasing at a rapid rate.

Since the outbreak of Covid-19 began in December 2019, scientists all over the world have been working to develop a cure or treatment for the virus, which causes shortness of breath or difficulty breathing, chest pain, fatigue, nausea, fever, headache, and sore throat. An estimated 3 billion people have been affected by lockdowns, virus prevention measures, and social distancing. A case study article, Combat Covid-19 with Artificial Intelligence and Big Data, published in May 2020, discusses the widespread use of big data tools and applications that help trace individuals who may have come into contact with people infected with the coronavirus. Some countries and regions have used big data analytics to detect and isolate heavily infected areas. South Korea was the first to use big data for aggressive testing and contact tracing, during the 2015 MERS outbreak (Lin & Hou, 2020). In response to Covid-19, South Korea implemented a contact-tracing system called the Smart Management System (also known as Covid-19 SMS), which uses GPS data from smartphones, credit card records, security camera footage, and transportation systems to detect anomalies and trace the movements of individuals with Covid-19. Health officials send warning notifications to any individual who might have been exposed to the coronavirus. The results obtained with this tool helped contain the spread of the virus in South Korea without lockdowns (Lin & Hou, 2020).
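The core idea behind contact tracing from location data can be sketched in a few lines. This is a simplified illustration and not a description of how the South Korean system is actually built: the `Visit` record, its fields, and the sample data are all hypothetical, and a real system would combine many more data sources and privacy safeguards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Visit:
    """One hypothetical location record: who was where, and when."""
    person: str
    place: str
    start: int  # minutes since midnight
    end: int    # minutes since midnight


def find_contacts(visits, infected_person):
    """Return everyone who shared a place with the infected person at the same time."""
    infected_visits = [v for v in visits if v.person == infected_person]
    contacts = set()
    for visit in visits:
        if visit.person == infected_person:
            continue
        for infected_visit in infected_visits:
            same_place = visit.place == infected_visit.place
            # Two time intervals overlap when each starts before the other ends.
            overlapping = (visit.start < infected_visit.end
                           and infected_visit.start < visit.end)
            if same_place and overlapping:
                contacts.add(visit.person)
    return contacts


# Invented sample data for illustration.
visits = [
    Visit("A", "cafe", 540, 600),  # infected person, 9:00 to 10:00
    Visit("B", "cafe", 570, 630),  # overlaps with A at the cafe
    Visit("C", "cafe", 610, 660),  # arrives after A has left
    Visit("D", "gym", 540, 600),   # same time, different place
]

contacts = find_contacts(visits, "A")
```

In this example only person B is flagged, because B is the only one who was in the same place as A during an overlapping period. Those flagged individuals are the ones a health authority would notify.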

In February 2020, shortly after the Covid-19 outbreak, China similarly developed a smartphone mini-program called the “health barcode”, which replaces the original paper-based access permits with digital, color-coded access. It assesses a combination of an individual’s health report, health risks, status, travel reports, and destination history to retrace the individual’s movements throughout the region. This helps to detect the virus, trace where infections originated, and identify people who came into close contact. “The green color barcode indicates individual is not infected, yellow color barcode indicates that the person is new to the city/region that needs to be monitored, and red color barcode indicates the person must be quarantined. It also assesses daily health risk/activities and temperature checks to prevent the outbreak in work environments” (Lin & Hou, 2020). Any person who violated or failed to follow the rules of the color-coded barcode was subject to a fine or even jail. As a result, the program helped reopen the economy and effectively reduced transmission in close-quarter environments and crowds.

It is critical to develop effective AI tools and applications that benefit people in the health sector by promoting data quality, access, and sharing, using both structured and unstructured data. The cases above are prime examples of how critical data is for delivering and ensuring evidence-based health care through AI algorithms designed to perform a function efficiently.

Case Study: Artificial Intelligence for Pharmaceutical Research and Development

An article published by Emerj: The AI Research and Advisory Company gives insights into how AI might help pharmaceutical companies manufacture newly discovered drugs in a more productive and organized way. “There are various solutions for data extraction, organizing research data from clinical trial of notes, other medical-documents, and vast prescribed data records of medical diagnosis” (Mejia, 2019). Software called I2E, in development today, purportedly analyzes data from images of drug compounds at the molecular level. I2E is a text mining application for data extraction and analysis of information. This will allow faster and more accurate predictions of how a drug may react when manufacturers try to turn it into a pill, a liquid medicine, or a topical salve (Mejia, 2019). A data scientist and a pharmacist will be able to find out whether the drug is likely to break down or become less useful, depending on the ingredients used to process it into different types of products, before the pharmaceutical company actually takes that risk.

Natural language processing for drug discovery can thoroughly search databases of structured or unstructured data, such as digitized reports of past clinical trials, and identify whether a drug is effective. Being able to predict how certain molecules react with each other will ultimately provide the results needed to determine how effective a drug is at treating a disease (Mejia, 2019). Most large software vendors are investing heavily in research and development for this type of big-data analytics software.

Predictive analytics for drug discovery will allow scientists and pharmacists to isolate a specific molecule and test its effectiveness in treating the diseases they are working to cure. It will let pharmaceutical companies compare the success or failure of past molecules to determine how a current drug should be used. It will also give better insight into a molecule’s potential commercial value in the marketplace. “The use of quantum physics and chemistry is in development to aid in analyzing the data as accurately as possible regarding microscopic substances” (Mejia, 2019). A certain type of crystal structure can be determined from the chemical reaction, whether the compound can bond with hydrogen atoms, and the density of the atoms associated with it (Mejia, 2019).

In AI for salt and polymorph testing, it is important to determine a drug’s solubility in water and in other liquid mixtures. This can help determine how long the drug will take to expire at room temperature and in extreme environments. “Pharmaceutical companies using machine learning and AI applications will facilitate this process on multiple different levels” (Mejia, 2019). Predicting the effects of a drug before anyone takes it could change the pharmaceutical industry and marketplace tremendously.

A data scientist using machine learning will be able to refine a drug before processing even begins, skipping some of the planned initial research steps because the answers will already exist in the database (Mejia, 2019). This is very difficult to accomplish: without true expertise in data science and knowledge of the different types of drugs in the pharmacy sector, it is very hard to build this software efficiently. Several data science consultancy companies are looking to build and invest in this technology. A company known as Tessella is working on solutions for drug discovery and manufacturing, and claims to be partnering with other companies to achieve this goal.

In the healthcare sector, workflow integration must account for the technical, cognitive, environmental, social, and political factors and incentives that affect the integration of AI. Promoting educational programs that inform clinicians about AI and machine learning practices is important for developing a coherent workforce that understands and abides by the rules and laws of proper patient care. Research and development of AI in pharmaceutical companies will continue to grow into the next century.

Case Study: Data Mining in Healthcare of Arusha Region

A case study published in 2013, Overview Applications of Data Mining In Health Care, discusses the Arusha region of Tanzania and the importance of putting data mining applications into practice there to handle and process large amounts of data, which would ultimately reduce costs and waiting times, detect fraudulent behavior, supply relevant information, and support safe treatments and diagnosis. Data mining is becoming increasingly popular due to several factors that motivate and enable its use. It can improve decision-making by discovering patterns and trends in large, complex data sets and turning them into useful information. Clinical and financial data have become essential to healthcare organizations because of financial pressures. The insight gained from data mining tools can greatly influence cost, revenue, and operating efficiency while maintaining a high level of care for customers (Diwani & Sam, 2013). For example, data mining applications can help healthcare insurers detect fraud and abuse of services, assist in decisions regarding patients’ symptoms and diagnoses, and provide statistical analyses of data and records for a particular region.

Data mining will benefit the people of the Arusha region because of corruption in its healthcare system. Data mining technology uses knowledge discovery in databases (KDD). The journal describes how this technology allows healthcare professionals to use methodologies for problem solving, decision making, planning, analysis, detection, diagnosis, prevention, integration, learning, and innovation. It extracts data for better prediction, forecasting, decision-making, and estimation (Diwani & Sam, 2013). The application will help find and track offenders in healthcare departments. Determining the most cost-effective drug compounds for treating a given population, compared with a mainstream population, can guide the steps needed to understand the side effects of medicines and diagnoses. These applications can also be used to predict which healthcare products and services a customer is likely to purchase.

Case Study: Trust and Confidence in Financial Sector

This section focuses on the development of artificial intelligence tools and applications for data security in the financial sector, and on why it is important for companies to invest time, money, and research in security. The number of data breaches is increasing every year. To stop or prevent data breaches, companies are closing security gaps and loopholes by securing the financial data stored on online servers with strong encryption. AI is helping to block leaks and limit the exposure of data in breaches.

A case study published in 2018, Artificial intelligence and augmented intelligence collaboration: regaining trust and confidence in the financial sector, presents ideas about, and the implementation of, AI in retail banking. The case study examines Barclays bank’s adoption of AI services. Over the years, Barclays has been keen to establish itself as one of the leading investors in AI in the UK. Like other banks, its aim is to find the most cost-effective way of adopting AI services that are easy for customers to access, navigate, and use. As the cognitive abilities of AI improve, the amount of data that banks such as Barclays keep and load has become enormous. AI makes it possible to handle data at record speeds and capacities in order to store information, inform solutions, and provide customers with a more immersive and personalized experience (Lui & Lamb, 2018). Barclays took its first major step in AI services in 2012, when it launched its first online banking application, called “Pingit”. The app enabled customers to make purchases from their mobile devices and through social media platforms such as Facebook and Twitter. Since then, Barclays has improved the overall personalized customer experience.

Barclays then started improving its capabilities in other aspects of online retail banking. One area in which Barclays has invested is the use of AI to manage online customer queries, with advanced chatbots addressing customer concerns almost instantaneously. Chatbots and automated calls already exist in customer service today; however, advanced chatbots able to answer directly without looking up built-in solutions are likely to spread across industries as more companies invest money and research in this technology. Most, if not all, millennials under the age of 40 use online banking as their preferred method. Barclays is seeking efficient ways to use AI to automate back-office tasks with robots. Barclays has invested in voice recognition technology that greatly improves telephone customer service, and in AI monitoring of customer transactions for fraud prevention. With improved AI feedback services, the bank can detect unusual behavior such as money laundering, security threats, and illicit transactions.
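One simple way to picture how software might flag unusual transactions is to compare each new amount against a customer’s normal spending. The sketch below is a deliberately basic statistical check, not the approach Barclays uses, which the source does not describe: the function names, the threshold of three standard deviations, and the transaction amounts are all assumptions made for illustration.

```python
import statistics


def fit_baseline(history):
    """Summarize a customer's normal spending as (mean, standard deviation)."""
    return statistics.mean(history), statistics.stdev(history)


def is_unusual(amount, baseline, threshold=3.0):
    """Flag an amount that lies more than `threshold` standard deviations from the mean."""
    mean, stdev = baseline
    return abs(amount - mean) / stdev > threshold


# Hypothetical past transactions for one customer.
history = [42.0, 38.5, 51.0, 47.25, 40.0, 44.75, 39.0, 49.5]
baseline = fit_baseline(history)

# Hypothetical new transactions to screen.
new_transactions = [45.0, 2500.0, 41.0]
flags = [is_unusual(amount, baseline) for amount in new_transactions]
```

Only the 2500.0 transaction is flagged here, since it is far outside this customer’s usual range. Production fraud systems typically learn from many more signals than the amount alone, such as location, merchant, and timing.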

Case Study: Controlling Attacks and Mitigation in AI based IoT Systems

Security experts and researchers are training and testing artificial intelligence applications that enhance the security of Internet of Things (IoT) devices by predicting and detecting malicious activity as anomalies. A report published in 2018 analyzes how IoT devices are being combined with AI applications for security purposes. “Since AI models and the connected objects are highly dependent, an attacker can target the front-end or back-end to breach the device” (Szychter, Daussin, 2018). Attacks on breached connected objects can compromise the integrity of the data they communicate, and the decisions taken by the AI could then be falsified by the attacker. Network attacks can intercept and relay data traffic, or jam it with traffic in what is known as a Distributed Denial of Service (DDoS) attack. Physical attacks can take place inside a connected electronic object by tampering with hardware and software features to retrieve credentials and encryption keys (Szychter, Daussin, 2018). To build this type of software, engineers will first need to gather data from numerous sources. They must then clean out data that is not effective or usable. Next, they will identify and select the right Machine Learning algorithm for data protection and security, and apply the chosen algorithm to implement the solution. Finally, the AI algorithm must be able to predict important security outcomes for future attacks.
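The steps described above (gather, clean, select and apply an algorithm, predict) can be sketched in a few lines. This is only an illustration under stated assumptions: the record field `pps` (packets per second), the sensor data, and the function names are hypothetical, and a simple mean-and-standard-deviation baseline stands in for whatever Machine Learning algorithm the engineers would actually select.

```python
from statistics import mean, stdev

def gather(*sources):
    """Step 1: merge records from several data sources into one list."""
    return [record for source in sources for record in source]

def clean(records):
    """Step 2: drop records whose 'pps' value is missing or negative."""
    return [
        r for r in records
        if isinstance(r.get("pps"), (int, float)) and r["pps"] >= 0
    ]

def fit(records):
    """Steps 3-4: 'train' a baseline model (mean and standard deviation)."""
    values = [r["pps"] for r in records]
    return mean(values), stdev(values)

def predict(model, record, k=3.0):
    """Step 5: flag a record more than k standard deviations from normal."""
    mu, sigma = model
    return abs(record["pps"] - mu) > k * sigma

# Hypothetical traffic samples from two IoT sensors under normal conditions.
sensor_a = [{"pps": 100}, {"pps": 110}, {"pps": None}]
sensor_b = [{"pps": 95}, {"pps": 105}, {"pps": -1}, {"pps": 90}]

training = clean(gather(sensor_a, sensor_b))
model = fit(training)

print(predict(model, {"pps": 102}))   # ordinary traffic
print(predict(model, {"pps": 5000}))  # flood-like traffic
```

Keeping each step as its own function mirrors the workflow in the text: the `fit` and `predict` functions could be swapped for a real learning algorithm without touching the gathering and cleaning code.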

Attackers can also target the core of a Machine Learning model by generating an adversarial input that fools its classifier. Modifying the input to a prediction system can lead to inconsistencies and errors that let the attacker breach a security device and steal sensitive information stored in it. An attacker can also use data poisoning, injecting false data to corrupt the machine learning system. Ensuring privacy is critical for data security, and companies are investing money to prevent these attacks. State-of-the-art applications and deep learning software are being trained and tested in search of stronger encryption and counter-threat tools.
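A small example may help show how an adversarial input fools a classifier. The sketch below assumes a toy linear classifier with made-up weights; it is not taken from the cited report. The attacker nudges each feature slightly in the direction that lowers the "malicious" score, which is the same idea behind gradient-sign attacks on larger models.

```python
def score(weights, bias, x):
    """Linear decision score: positive means 'malicious'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def classify(weights, bias, x):
    return "malicious" if score(weights, bias, x) > 0 else "benign"

def adversarial(weights, x, eps):
    """Shift every feature by eps against the sign of its weight.

    For a linear model this is the perturbation that lowers the score
    the most for a given maximum change per feature.
    """
    def sign(w):
        return 1 if w > 0 else -1 if w < 0 else 0
    return [xi - eps * sign(w) for w, xi in zip(weights, x)]

# Hypothetical trained model and a sample it correctly flags.
weights = [2.0, -1.0, 0.5]
bias = -1.0
x = [1.0, 0.5, 1.0]

x_adv = adversarial(weights, x, eps=0.4)
print(classify(weights, bias, x))      # original input
print(classify(weights, bias, x_adv))  # slightly modified input
```

Here a change of only 0.4 per feature flips the decision. Data poisoning, the other attack mentioned above, works at training time instead and is not shown in this sketch.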

In the data security sector, privacy is a huge concern, and companies that invest more money are at an advantage and less susceptible to data breaches. There is a severe shortage of cybersecurity professionals who can work on strong encryption to prevent data leaks and run a proper security incident response against hackers, so it is important for businesses to implement AI services for faster and more efficient protection of data and privacy. Data security breaches are expected to increase sharply, which is why businesses need the help of artificial intelligence to store, prioritize, and strongly encrypt confidential information and so prevent devastating financial losses.

Important ethical and legal considerations involved in researching and developing A.I.

In 2017, shortly before his death, British physicist Stephen Hawking warned humanity that the development of artificial intelligence could become “the worst event in human history,” saying that without proper ethical, defensive, and protective measures, “we could conceivably be destroyed by it.” In 2014, billionaire entrepreneur Elon Musk, known for SpaceX, PayPal, and the electric car company Tesla, warned, “With artificial intelligence we are summoning the demon.” Mark Zuckerberg, who started Facebook, also addressed concerns about artificial intelligence and how it could possibly wipe out humanity if it were to become sentient and view us as a threat (Center, 2020). The fear of intelligent machines taking over mankind has existed for a long time and is depicted in popular science fiction franchises such as Terminator, Blade Runner, Alien, and The Matrix. The merging of humans and machines is likely to become common in the coming decades. It seems, though, that a harmful machine capable of destroying humans is closer to reality than fiction. We need to consider the ethical and legal issues involved in the development of fully sentient AI.

The research and development of artificial intelligence requires extensive knowledge and expertise that merges statistics, linguistics, psychology, philosophy, cognitive science, neuroscience, and information science. All of this work needs ethical standards and procedures that shape the use of the final product before it enters mass production. It is important to set clear goals and functions for an artificial intelligence system, with a proper code of conduct that establishes clear and specific grounds for the use of AI services. Any person who violates these terms of use needs to be held liable and be subject to penalties. Federal agencies must pursue and strengthen public-private partnerships in research and development, increasing investment and expertise so that preliminary action can be taken against the misuse of AI services.

AI will advance a multitude of healthcare services in the future. Radiology will become a data-driven specialty, and the people most affected will be medical practitioners who bring AI tools into clinical practice. Many scientists suggest that the field of radiology will be completely replaced by AI algorithms in the future (Brady & Neri, 2020). Everybody involved in the development of these applications has an obligation to consider the ethics of how AI algorithms are developed and how they are used. The impact must be for the good of the public and not to its harm. Any ethical dilemmas, abuses, or even lawsuits that arise, deliberately or accidentally, from AI applications in radiology can cause trouble for patients. Misuse of data or the widespread application of AI tools has the potential to harm someone. Many scientists in radiology divisions who develop deep learning and AI applications work under ethical statements, and professional bodies have suggested codes of ethical behavior relating to AI, including the Italian Society of Medical and Interventional Radiology, the Canadian Association of Radiologists, and the French Radiology Community.

An AI-based algorithm used in a medical department such as radiology requires extensive access to large volumes of patient data for the purposes of training, testing, and validating the algorithm. This data must be annotated and labeled correctly, and the algorithm's output should match its intended function. Any misuse or deviation from correct information can cause harm to a patient, a lawsuit, or both. A major practical issue is that obtaining the correct data and the correct imaging for a patient’s problem can be a very labor-intensive task (Brady & Neri, 2020). The data must first be made available under permissible access restrictions, and it usually involves patient imaging studies. The major ethical issues concern ownership, how the data is used, and whether the privacy of this type of data is “truly” protected. Under the General Data Protection Regulation (GDPR), which is an EU law, patients own and control their own sensitive information and personally identifiable data, and they must give explicit consent before the data is collected, re-used, or shared.

We also need to be concerned about companies that control large volumes of data across both social media platforms and medical AI applications. It is feasible for an entity to gather data from electronic devices such as smartphones and tablets, from internet searches, and from social media usage, and to match it against healthcare data, allowing a patient to be identified. This can attract unwanted attention in the form of personalized advertising, financial or insurance consequences, or even extortion of patients who do not want their information leaked or shown to the public (Brady & Neri, 2020). Ethical practice in healthcare departments needs to be thoroughly established with a proper code of conduct and guidelines. Radiologists must be trained in the appropriate use of AI in clinical practice to ensure proper ethical procedures are in place. A radiologist who uses AI will become the preferred choice in the medical sector, putting a radiologist who does not incorporate AI practices at a disadvantage. The companies that control and access AI services need to be regulated and monitored so that harmful or abusive practices do not take place.

Liability is a big issue in the medical sector's use of AI. A doctor is held legally liable for bad outcomes for their patients, but who will be liable for a bad outcome caused by AI in the future? Will it be the doctor who uses the AI tool to help a patient, the software developer who designed it, or the hospital that purchased it? If a radiologist fails to properly use or follow the advice of an AI tool and the patient then has a bad outcome, a court could rule that overriding the AI was negligent. “The healthcare systems that are deploying AI tools must make sure that the benefits are being delivered to all patients they serve, not just to those who have access to greater financial resources” (Savic, 2019). A major concern is that resources will not be evenly distributed, because some countries, regions, or even subgroups of society can be denied access to the potential benefits of AI. Fair usage of and access to these tools must always be monitored. Conflicts of interest can interfere with the fair treatment of all patients, which can create unethical and immoral results.

Data privacy is a huge concern and a legal constraint. Personal privacy violations caused by leaks or exposure of private data are emerging as one of the most significant public policy issues and threats. In the world of financial security, integrity of information is important. The ethical considerations for AI in the security sector are wide-ranging and concern data sharing, data privacy, data access, surveillance, consent, ownership of health data, and evidence of efficacy. Misuse or abuse of these AI tools can lead to ethical problems such as unfair, misguided, or inconclusive outcomes that affect a person negatively. As more and more services move online and important tasks are carried out by automated machines, the result will be inevitable job losses (Lui & Lamb, 2018). As data privacy cases increase, proper rules of engagement must be developed and followed to ensure that AI systems involve no tampering with or breach of data.

Crucial questions concerning ethics in AI practices:

i. What happens to society after AI takes over human labor?

ii. How can we distribute all wealth created by machines?

iii. How do machines affect our psychological behavior and interaction?

iv. How can we guard against making mistakes?

v. How do we eliminate AI bias in programmed systems?

vi. Security and data leak prevention, how do we keep AI safe from adversaries?

vii. How do we protect individuals against unintended/unforeseen consequences?

viii. How do we stay in control of a complex intelligent system at all times?

ix. What do we do and how do we define the humane treatment of AI? (Lohrmann, 2018)

Gender and racial discrimination through AI can become common and can affect society on a larger scale. Imagine a company using facial recognition and analysis technology that blatantly targets or racially profiles groups of people, drawing on statistics about work, crime, and gender that allow a person to generalize about an entire group. Minorities can become victims of algorithm-driven practices that deny them equal treatment, such as sexist hiring practices, racist criminal procedures and outcomes, false advertising, and the spreading of rumors and false information. A harmful system that categorizes people and singles them out for certain actions can have major consequences for society if left unchecked and can even create a dystopian society. It is important to examine the ethical and moral consequences of AI practices to ensure proper guidelines are always followed. The government needs to strengthen the law and create better policies against people who abuse AI services or violate their terms of service agreements. The public needs to be aware of and concerned about the misuse of privilege taking place, so that precautions can be taken.

Conclusion:

The invention of the World Wide Web enabled instantaneous communication of information, accessible over the internet from anywhere in the world. This has allowed research and development in artificial intelligence to progress at a rapid rate. The World Wide Web was followed by the Internet of Things (IoT), which has expanded the applications of artificial intelligence. IoT uses billions of interconnected devices, all communicating, sharing, and collecting data. Automation in technology has increased tremendously within the last two decades. The progression of cloud computing services within the last decade has increased the demand for jobs and education in AI services, merging different career fields such as science, biology, business, mathematics, computing, and quantum physics. Research and development in the medical and data privacy sectors will continue to grow into the next century. Many industries are looking to use and invest in AI services for more efficient work processes.

As the processing power of computers increases, humans will be able to research and develop quantum computers with more than 100 qubits, which could be more powerful than all current computers in the world combined. This invention will be a true next generation of quantum computing, and it could change human perception of life altogether. Quantum computers may play a vital role in creating AI applications. We may not be able to distinguish between reality and fiction in the coming decades. Learning more about quantum mechanics will enable us to develop more advanced quantum computers that support AI applications and allow humans to enter simulated virtual and augmented realities. With the help of quantum computing, scientists may be able to map out the entire observable universe without exploring space, and in the future even upload consciousness into a computer and replace the entire biological body with synthetic parts. The possibilities for gaining knowledge with quantum computers are endless.

In the future there will be complex A.I. systems programmed with the ability to make the decisions best suited to a situation based on previous actions, and these decisions will impact our society. Such a system is described as a quantum analysis neural network, which uses predictive analysis to determine the best decision to take. A quantum analysis neural network would mimic the human brain by recognizing scattered relationships in a set of data (Mughaz, Bouhnik, 2020). A neural network can adapt to a changing environment without the need to redesign its output. It might even replace jobs that require higher-level functions. A.I. will play a huge role in other fields such as transportation, nanotechnology, robotics, agriculture, and manufacturing. More and more companies are investing time, money, and research to make products and software intelligent and suitable for efficient and accurate work.

Artificial Intelligence is the future of technology and is impacting virtually every industry. It is changing the way we conduct business. It is bound to become the greatest technological innovation of the 21st century. A.I. supports humans by helping to reduce manual work and enhance productivity. Soon, it will become a necessity for small businesses to use A.I. and machine learning in almost every aspect of their work. For example, archiving business transactions, tagging products, or listing things in order can take a significant amount of time when done by hand, but fully automated systems capable of handling the most complex functions by themselves will give an advantage in every aspect. “[AI] is going to change the world more than anything in the history of mankind. More than electricity” (Dr. Lee, 2018, AI oracle and venture capitalist). Likewise, artificial intelligence can help in deciding a marketing budget, identifying a marketing trend, or determining patterns in stock prices by going over statistical data and producing near-accurate predictions. A powerful algorithm working behind the scenes will help identify the current market trend as accurately as possible. Examples include closely predicting fashion industry trends, popular social marketing demands, and financial data trends.

The progress of artificial intelligence is accelerating as more companies get involved by investing in this technology through crowdfunding, mutual funds, and other types of investment. We can already witness the spread of AI technologies with surprising potential. Virtualization, cloud computing, and IoT devices are speeding up the creation of advanced AI services that will do more work than a human in a fraction of the time. One application discussed here is in the healthcare sector, where companies are investing in the research and development of software that can store patient information, act as a virtual assistant, and provide medical diagnoses to aid clinicians with patients’ symptoms and disease prevention. A second application is in the security sector, where companies are investing in financial data security and privacy by researching and developing strong algorithms for intelligent encryption of financial and confidential information. The further artificial intelligence progresses, the more challenges and concerns arise. While the future of artificial intelligence looks very bright, certain precautionary measures are necessary to address ethical and moral concerns. Government regulation and public policy need to ensure that the highest ethical standards are applied in the use of AI. Overall, AI will foreseeably change our future lifestyle.

This article has provided an overview of artificial intelligence in research, development, and applications. Its purpose was to assess the AI methodologies being implemented in various sectors and the requirements of the field of study. The article described what led to the start of AI and its current state. It addressed how AI is being trained and tested to achieve the desired results in the research and development of applications, and it focused on applications of A.I. in the health and security sectors. Several case studies in the medical and data security sectors were analyzed in detail, since these sectors are expected to drive AI for the next century and show how the business market is changing with the shift in demand for artificial intelligence. The article addressed ethical concerns about AI and the precautionary measures that must be taken to prevent harm from or abuse of AI services. Finally, it addressed what the future holds for AI and what possibilities can arise from its advancement.

References:

Aydede, M., & Guzeldere, G. (2000). Consciousness, intentionality and intelligence: some foundational issues for artificial intelligence. Journal of Experimental & Theoretical Artificial Intelligence, 12(3), 263–277. https://doi-org.libraryproxy.uwp.edu:2443/10.1080/09528130050111437

Basu, K., Sinha, R., Ong, A., & Basu, T. (2020). Artificial intelligence: How is it changing medical sciences and its future? Indian Journal of Dermatology, 65(5), 365–370. https://doi-org.libraryproxy.uwp.edu:2443/10.4103/ijd.IJD_421_20

Brady, A. P., & Neri, E. (2020). Artificial Intelligence in Radiology — Ethical Considerations. Diagnostics (2075-4418), 10(4), 231. https://doi-org.libraryproxy.uwp.edu:2443/10.3390/diagnostics10040231

Diwani, S., Mishol, S., Kayange, D. S., Machuve, D., & Sam, A. (2013, August). Overview Applications of Data Mining In Health Care: The Case Study of Arusha Region. Retrieved November 10, 2020, from https://d1wqtxts1xzle7.cloudfront.net/31885375/I0382073077.pdf?1379129005=&response-contentdisposition=inline%3B+filename%3DI0382073077.pdf&Expires=1605055522&Signature=EOLf2ktwJxcjkL (case study Arusha region)

Farahmand, Y., Heidarnezhad, Z., Heidarnezhad, F., Muminov, K. K., & Heydari, F. (2014). A study of quantum information and quantum computers. Oriental Journal of Chemistry, 30(2), 601–606. doi: http://dx.doi.org.libraryproxy.uwp.edu:2048/10.13005/ojc/300227

Foote, Keith D. “A Brief History of Artificial Intelligence.” DATAVERSITY, 5 Apr. 2016, http://www.dataversity.net/brief-history-artificial-intelligence/.

Harrison, M. (n.d.). Parkside Library: Off-Campus Access. Retrieved October 29, 2020, from https://link-springer-com.libraryproxy.uwp.edu:2443/article/10.1007/s00779-011-0399-8

Lewis, Tanya. “A Brief History of Artificial Intelligence.” LiveScience, Purch, 4 Dec. 2014, http://www.livescience.com/49007-history-of-artificial-intelligence.html.

Lui, A., & Lamb, G. W. (2018). Artificial intelligence and augmented intelligence collaboration: regaining trust and confidence in the financial sector. Information & Communications Technology Law, 27(3), 267–283. https://doi-org.libraryproxy.uwp.edu:2443/10.1080/13600834.2018.1488659 (case study Barclay bank)

Mughaz, D., Cohen, M., Mejahez, S., Ades, T., & Bouhnik, D. (2020). From an Artificial Neural Network to Teaching. Interdisciplinary Journal of E-Learning & Learning Objects, 16(1), 1–17. https://doi-org.libraryproxy.uwp.edu:2443/10.28945/4586

Savić, D. (2019). Are we ready for the future? Impact of Artificial Intelligence on Grey Literature Management. Grey Journal (TGJ), 15, 7–15.

Akama, S. (2014, May). Elements of Quantum Computing. Retrieved October 22, 2020, from http://mmrc.amss.cas.cn/tlb/201702/W020170224608149203392.pdf

Haug, E., & Hoff, H. (2017, November 10). Stochastic space interval as a link between quantum randomness and macroscopic randomness? Retrieved November 24, 2020, from https://www.sciencedirect.com/science/article/pii/S0378437117310725

Lohrmann, D. (2018, April 15). Privacy, Ethics and Regulation in Our New World of Artificial Intelligence. Retrieved November 08, 2020, from https://www.govtech.com/blogs/lohrmann-on-cybersecurity/privacy-ethics-andregulation-in-our-new-world-of-artificial-intelligence.html

Makhdoom, H. R., Asim, S., & Dodor, A. (2018, June). Empirical Case Study for Artificial Intelligence: Improving the Way of China Industrial Transformation. Retrieved November 25, 2020, from https://pdfs.semanticscholar.org/252d/0a06243fa0299c47291d730f939d67e0ee20.pdf

Szychter, A., Ameur, H., Kung, A., & Daussin, H. (2018, November). The Impact of Artificial Intelligence on Security: A Dual Perspective. Retrieved November 24, 2020, from https://www.cesar-conference.org/wpcontent/uploads/2018/11/articles/C&ESAR_2018_J1-03_ASZYCHTER_Dual_perspective%20_AI_in_Cybersecurity.pdf

Mejia, N. (2019, April 15). Artificial Intelligence for Pharmaceutical Research and Development. Retrieved November 21, 2020, from https://emerj.com/ai-sectoroverviews/artificial-intelligence-pharma-research-development/

Aslett, M., & Curtis, J. (2019, July 19). Accelerating AI with Data Management; Accelerating Data Management with AI. Retrieved October 29, 2020, from https://www.ibm.com/downloads/cas/YD5R1XLB#:~:text=While%20data%20management%20can%20improve,focus%20on%20higher%2Dimpact%20tasks (IBM report)

Gerlick, J. A., & Liozu, S. M. (2020, January 16). Ethical and legal considerations of artificial intelligence and algorithmic decision-making in personalized pricing. Retrieved November 06, 2020, from https://link.springer.com/article/10.1057%2Fs41272-019-00225-2

Center, N. (2020, March 22). AI and Future of the World: Challenges and Perspective. Retrieved October 29, 2020, from https://medium.com/@pr_13157/ai-and-future-of-the-worldchallenges-and-perspective-bdf7de1d3dd4 (used a quote Dr. Lee)

West, D., & Allen, J. (2020, April 28). How artificial intelligence is transforming the world. Retrieved October 29, 2020, from https://www.brookings.edu/research/how-artificialintelligence-is-transforming-the-world/

Lin, L., & Hou, Z. (2020, May 21). Combat COVID-19 with artificial intelligence and big data. Retrieved November 11, 2020, from https://academic.oup.com/jtm/article/27/5/taaa080/5841603 (case study covid-19)

Tayo, B. O., PhD. (2020, August 12). How Much Math do I need in Data Science? Retrieved November 19, 2020, from https://towardsai.net/p/data-science/how-much-math-do-i-needin-data-science-d05d83f8cb19